Vol. 11 No. 3, April 2006


Bo-Christer Björk and Žiga Turk

Introduction. This case study is based on the experiences with the Electronic Journal of Information Technology in Construction (ITcon), founded in 1995.

Development. This journal is an example of a particular category of open access journals, which use neither author charges nor subscriptions to finance their operations, but rely largely on unpaid voluntary work in the spirit of the open source movement. The journal has, after some initial struggle, survived its first decade and is now established as one of half-a-dozen peer reviewed journals in its field.

Operations. The journal publishes articles as they become ready, but creates virtual issues through alerting messages to “subscribers”. It has also started to publish special issues, since this helps in attracting submissions, and also helps in sharing the work-load of review management. From the start the journal adopted a rather traditional layout of the articles. After the first few years the HTML version was dropped and papers are only published in PDF format.

Performance. The journal has recently been benchmarked against the competing journals in its field. Its acceptance rate of 53% is slightly higher and its average turnaround time of seven months almost a year faster compared to those journals in the sample for which data could be obtained. The server log files for the past three years have also been studied.

Conclusions. Our overall experience demonstrates that it is possible to publish this type of OA journal, with a yearly publishing volume equal to a quarterly journal and involving the processing of some fifty submissions a year, using a networked volunteer-based organization.

The electronic open access journal as a phenomenon emerged in the mid-1990s, enabled by the rapid development of the Internet. Open access means that scientific publications are made totally freely available on the Web, without any access restrictions. The open access movement was based on a realisation that the traditional subscription-based publishing model unnecessarily restricts access to research results, in a field which essentially is a public good. Most of the early open access journals were founded by single academics or groups of academics at a time when traditional subscription-based journals were still published on paper only. Thus, open access journals not only offered free availability of the articles, they also pioneered the use of the electronic medium.

Since those early days the whole scholarly publishing field has changed dramatically. Almost all major journals are also available in an electronic format, often offered to universities as big package deals, bundling huge numbers of titles from a single publisher. New, professionally managed, open access publishers (for example, BioMed Central and Public Library of Science), which finance their operations through author payments, have also entered the market. Many big scientific publishers are experimenting with single open access journals or a hybrid form called open choice. Open choice gives authors the possibility of having their papers made openly available in exchange for payment of a basic fee. At the same time there are many advocates of open access who believe that scholars should continue to publish their articles in traditional subscription-based journals but should at the same time upload open access copies of the papers to subject-based or institutional e-print repositories (Harnad et al. 2004). This alternative mode of open access is often referred to as the green route, as opposed to the gold route of the journals themselves being open access. Currently, perhaps 4% of scholarly journal titles and 1-2% of articles are directly published as open access, whereas it is estimated that some 10-20% of all scientific articles can be found openly in full text on the Web in some version, mostly as copies in e-print repositories or on the home pages of the authors (Harnad et al. 2004).

Having outlined the broad picture of the development of open access over the last ten years, we concentrate in this case study on the practical experience of setting up and running the Electronic Journal of Information Technology in Construction (ITcon). The journal is now more than ten years old and is representative of the type of open access journal which is managed without a formal budget, employed staff or author payments, in the same spirit as many open source software projects. In addition to reporting on hands-on experience, we describe the development of a benchmarking method for scientific journals and its application to the case of ITcon. We hope that sharing experience of this type of publishing will be of assistance to others who are initiating or running similar journals.

The study of the application of information and communication technology in construction (architecture and civil engineering) is a relatively young field, with community-forming events going back to the 1970s and the first dedicated peer-reviewed journals to the mid-1980s. Researchers study areas such as the computer-aided design of buildings, project management using IT tools, applications of IT to logistics etc. Currently, there are seven peer-reviewed journals specialising in the subject: two are open access, two are society-published, subscription-based journals and three are purely commercial. In 2004 these journals published 235 articles. Since the average acceptance rate is in the order of 40-50%, this means that there were about 500 submissions. In addition to publishing in these specialised journals, scholars in this field can also publish in construction management journals and in computer science and IT-specific journals. Since the technology develops rapidly, it is important in this field to get research results out quickly. Also, some of the papers could potentially attract many readers from among practising architects and civil engineers, if they were easily available.
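The estimate of about 500 submissions follows from dividing the number of published articles by the acceptance rate. The short sketch below reproduces that arithmetic for the two ends of the 40-50% range. The function name is ours, chosen for illustration, and the calculation assumes that one year's publications correspond to that year's submissions, which is only approximately true.

```python
def estimated_submissions(published, acceptance_rate):
    """Estimate submissions from published articles and acceptance rate.

    Assumes every published article corresponds to one accepted
    submission in the same period (a simplification).
    """
    return published / acceptance_rate


# 235 articles published in 2004; acceptance rate of 40-50%.
low = estimated_submissions(235, 0.50)   # higher acceptance, fewer submissions
high = estimated_submissions(235, 0.40)  # lower acceptance, more submissions
print(f"between {low:.0f} and {high:.0f} submissions")
```

The range this gives, roughly 470 to 590, brackets the figure of about 500 quoted in the text.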

ITcon has from the start been managed by a group of four editors, two of whom have carried the major work burden and are the co-authors of this paper. The editor-in-chief (and first author of this paper) has led the work with the journal, negotiated with editors of guest issues and has managed a substantial part of incoming manuscripts through the review process. One co-editor (and co-author of this paper) has had, in particular, responsibility for creating the Web platform on which the journal operates. The Web site has from the beginning resided on the servers of his department. The other two co-editors have taken part in policy-setting discussions and have also managed a share of the submissions.

Initially ITcon received a grant of some 30,000 Euros from the Swedish Council of Building Research. This helped in some promotional activities, such as printing paper versions of the first four years' volumes, used for free promotional distribution at conferences etc. The journal could also have been established without this grant, since the time input by the editors and the computing costs are seen as an essential part of academic work and are absorbed by their universities.

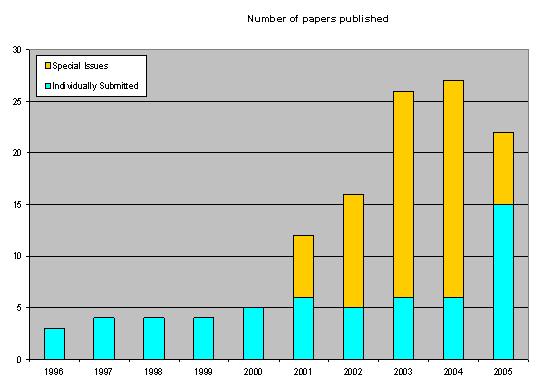

The number of papers published by ITcon over the years is shown in Figure 1.

As can be seen from the figure, the first five years of the journal were rather difficult in terms of attracting enough good submissions. The editors rather naively assumed that a good service and wide readership would promote the journal. They underestimated the fierce competition for the manuscripts in this area as well as the high priority that academics place on publishing in journals that are established on the shortlist of their academic or funding agency's evaluation committees or indexed by institutions such as the ISI.

The decision to also start publishing special issues, which was triggered by a proposal from one of the editorial board members in 2001, proved to be a turning point, as clearly seen in the publication statistics. First, our experience is that it is easier to get potential authors to submit to special issues than to make individual submissions. They value the better exposure that they get in a special issue and perhaps also the clearer publication schedule and promise of more specialised reviewers. Guest editors of special issues also have good networks which help in attracting submissions. Secondly, publishing in the form of special issues relieves the editors of some of the burden of assigning and managing reviews. Finally, based on the publication figures of 2005, the increased volume of papers that ITcon published also generated a greater interest in spontaneous submissions. However, part of the rise in 2005 can also be explained by the merger with the International Journal of IT in Architecture, Engineering and Construction (IT-AEC).

IT-AEC was a competitor of ITcon that started publishing in traditional format in 1999 but could never establish a subscription base strong enough to guarantee its survival. The two journals merged in 2005, adopting ITcon's open access publication model, which had proved to be more resilient.

ITcon now seems to have stabilised on publishing around twenty-five papers per year, which is equivalent to a typical quarterly scholarly journal. This can be compared to a study of open access journals (Hedlund et al. 2004) which indicated that the average number of articles published yearly in open access journals was twenty and the median sixteen.

In our experience this number of papers is the upper limit of what can be sustained using the current management and operation mode. The total amount of working time that the editors and the editorial assistance devote to the journal is around six to eight months a year. To this should be added the time spent by guest editors and reviewers. If the volume of submissions were to increase significantly from the current level of around fifty a year, this would put too much strain on the editorial team. Some paid work force would be needed, the active team would need to be enlarged, or some form of rotation of editors would need to be established. In our field author payments are not a realistic option.

The Web has made possible new ways of organizing the review process; for instance, where manuscripts are published immediately and structured feedback from readers is used as a peer review, which, after a period, promotes the better articles to peer reviewed status. An example of such a journal is the Electronic Transactions on Artificial Intelligence (Sandewall 1997). In the case of ITcon we decided to adopt a traditional blind, peer-review process. Because of the relatively small size of the research community, double-blind review was not chosen, since masking the authors would often be very difficult.

In the planning stage there was a discussion whether the journal should adopt a more hypermedia-like layout in order to take advantage of the possibilities offered by the Web. In the end we opted for a traditional look, so that a paper that is printed out looks exactly like any normal journal paper. This has also made the processing of the articles easier, in particular after we dropped the HTML-version, which was used in the first few years. This decision was based on a clear trend showing that practically each user who had a look at the HTML version of a paper also downloaded the PDF version but not vice versa. We believe that the readers were using PDF as the preferred format for printing, for the local archiving of the paper and for potential re-distribution of the paper to their colleagues or students.

One of the main reasons for making rather conservative decisions regarding the review process, publication format and content itself was that, by conforming to existing conventions, the journal would be more likely to acquire prestige and status comparable to those of the competing print journals. This policy, however, did not stop the journal from accepting rich multimedia content and software as appendices and, since the costs of storage are negligible, ITcon never placed any limits on the size of the articles or the number of graphical elements.

We chose not to publish issues, since we believed one of the greatest advantages of electronic-only publishing was the possibility of publishing an article without any delay once it was ready. In hindsight it appears that the decision was correct since it has reduced the publication delay to the absolute minimum. Also, it could be claimed that the periodical e-mail messages we send to registered readers (more than 800 at present) form virtual issues, since we try to send them four to five times a year, rather than for each published paper.

The evolution of ITcon's technical platform closely followed the general evolution of content provision on the World Wide Web. From its inception in the early 1990s, the Web was expected to allow anyone to publish, bypassing the few professional publication channels, and to get their ideas out into the open. However, the early Web, made up of httpd servers and documents in HTML code, fell short of this promise. It was far from trivial to set up a Web server, create HTML pages and save them to the proper folders on the server so that they could be read by users on the Internet. But when the first ITcon volume was set up in 1995/96, not much else was available.

The first generation ITcon pages were made with a text editor (e.g., Microsoft's Notepad, the Vi editor, or Emacs) and saved on the Web server. The papers were written in Word and converted to RTF; the RTF was then converted to HTML with a free tool, and the HTML was post-processed by a script created specially for ITcon. The script made sure that the formatting was consistent, checked the internal citations and made hyperlinks to figures and references. The author of the script was also its sole user and, because only a small number of papers were published at the time, the software was continuously updated. The technical part of the publishing had to be done by a person very knowledgeable in the technical details of HTTP, HTML and scripting languages. Here too ITcon was quite conservative, typically requiring of its users not the latest Web browsers but those a generation or two old. Tables, for example, were introduced into the ITcon layout in 1997.

The second generation of ITcon came with the departure from Notepad and Vi and the beginning of the use of Web site editing suites such as Microsoft FrontPage and Macromedia Dreamweaver. These packages allowed users to create not only single Web pages but whole Web sites using a visual editor and an interface similar to that of a word processor. Once suitable templates had been generated, the work of setting up pages could in principle be left to secretarial staff. It was still helpful, however, for the person setting up pages to have some knowledge of HTML and an understanding of servers, so an ideal profile for doing the technical tasks in ITcon would be a graduate level student with a solid background in Web technologies. At about this time the HTML version was dropped, because the RTF that Word produced was becoming less and less compatible with the RTF conversion tool and the subsequent scripts. The built-in HTML output of Word was impractical to use, because the resulting HTML was polluted with formatting tags rather than structural ones. The casualty of dropping the HTML version and the script that created it was the strict, computational checking of the references and their integrity. This has since been left to human judgement.

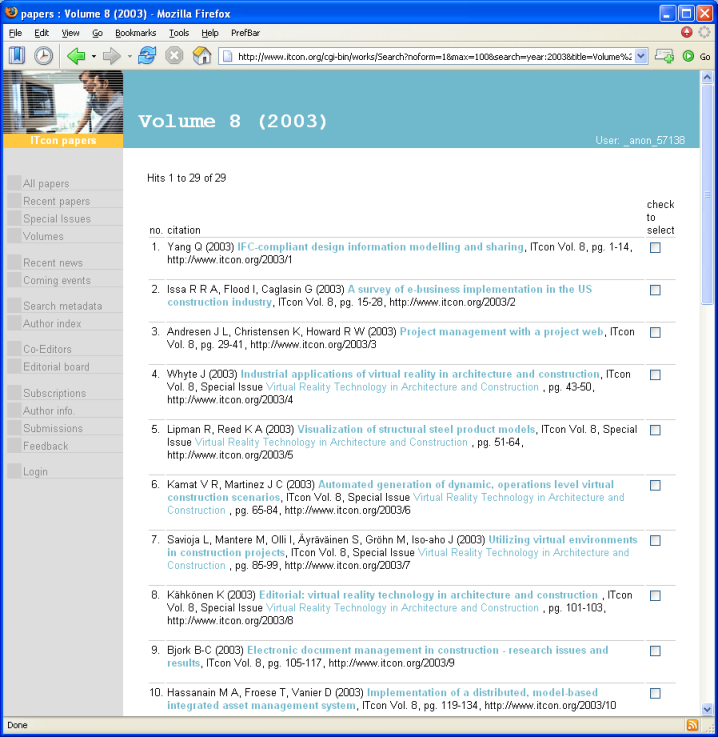

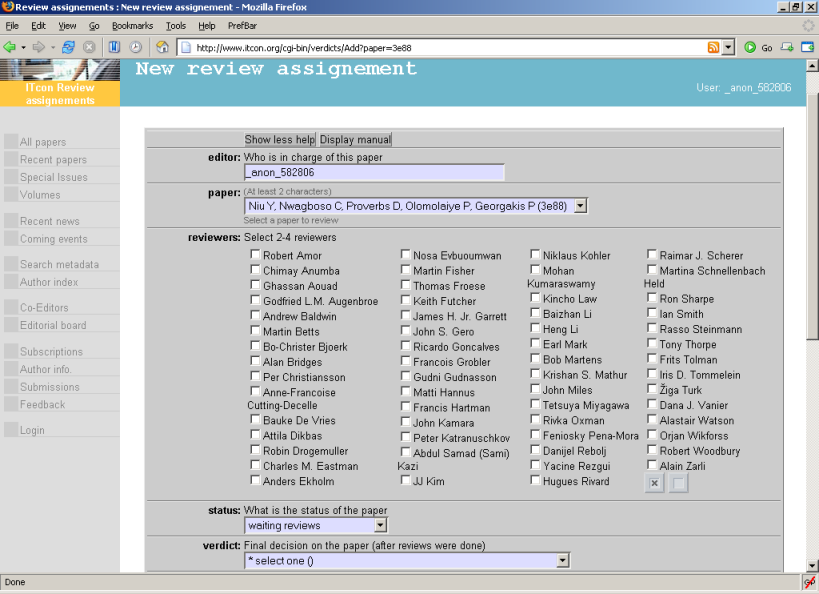

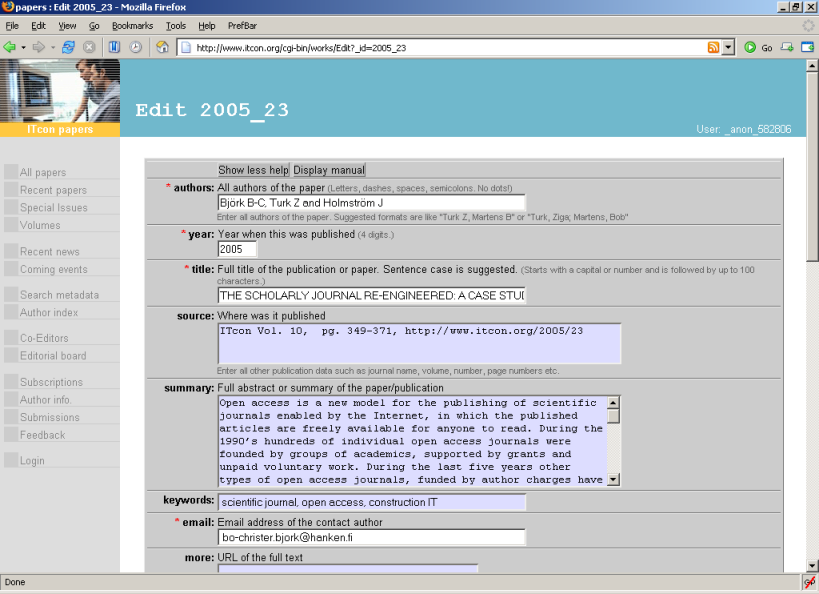

In 2002 the two organizations that were at the core of publishing ITcon and a handful of other partners were awarded an EU project called SciX. The project dealt with various issues related to open access. One of the results of this project was the SciX Open Publishing Platform (SOPS), which provides support for organizing conferences and publishing proceedings, various archives and also journals. At the core of the platform is a content management system that uses a database to store the information and scripts to display the information to the users. The system is initially set up by an IT expert but afterwards all publishing activities are carried out through a Web interface. Setting up papers, changing pages etc. becomes as easy as filling in a form. Most of the day-to-day technical tasks of running the journal can now be entrusted to secretarial staff.

Since 2003 ITcon has increasingly used this system, not only to publish papers but also to manage the workflow of submitting, reviewing and revising manuscripts.

With the growing complexity and number of features we expected that the size of the PDF files would grow over the years, but this has not been the case. The median size of the PDF files has been about half a megabyte, with the largest files being up to six megabytes.

At two stages we have tried to make systematic studies which help us in defining the strategy for ITcon as well as provide results that have a value in themselves. In 2000 we conducted a Web survey with researchers in our domain, to which 236 construction IT researchers responded (Björk and Turk 2000). At that time these researchers already downloaded around 50% of all the material they read from the Web.

In the domain of the listed construction IT and general IT journals, ITcon shared second place in the answers to the question which journals the researchers followed most (a non-construction-specific IT journal ranked highest). This confirmed that we had a readership on a par with the best competing journals in our field.

The study also provided more robust evidence that researchers as readers heartily welcome Open Access journals but that they are reluctant to submit their best work to them, due to career advancement aspects. This we were already learning the hard way. Later studies have more or less confirmed this situation (Swan and Brown 2002, Rowlands et al. 2004).

In 2005 we carried out a benchmarking exercise, comparing ITcon with some of its competitors concerning qualities such as publishing delay, acceptance rate etc. The benchmarking methodology itself is more fully explained in a forthcoming article (Björk and Holmström 2006) and the more detailed results concerning ITcon have been reported earlier (Björk et al. 2005).

Journals have traditionally tended to be benchmarked only in terms of prestige or, using more objective methods, by citation counting. We also wanted to bring out into the open factors such as publishing delay (from submission to publication), average acceptance rate, and number of readers. These are factors that authors would find very useful when deciding where to submit, but which are seldom publicly available.

One of the factors we could measure for all seven construction IT journals (of which all except one claim to be international) was the regional spread of authorship. This could be done through a rather tedious summation from the author affiliations posted with the articles. The analysis revealed that ITcon had perhaps the most globally balanced distribution of all the journals, with a clear lead in North European authors. We also studied the compositions of the editorial boards of the journals, and found considerable overlap, with three of the journals, including ITcon, forming a clear cluster (IT-AEC, which merged with ITcon in 2005, would have formed part of the cluster).

Shortening the publishing delay was from the start one of the major motivations for establishing an open access journal. The average delay of ITcon over its history has been 6.7 months, and this compares very favourably with the two other journals we were able to make calculations for (18.0 and 18.9 months respectively). As a comparison, Raney (1998) reported a 21.8 month delay for the Transactions on Geoscience and Remote Sensing.

The overall acceptance rate of ITcon was 55%. We were able to get published statistics for one of the other construction IT journals (47%) and also for the most highly ranked construction management journal in our 2000 study (51%). These figures can be compared with some recent figures according to which the average acceptance rate for the subscription-based journals published by members of the Association of Learned and Professional Society Publishers was 42% (Kaufman-Wills Group 2005). The average for DOAJ-indexed open access journals was 55%, if one excludes two large biomedical open access publishers (ISP and BioMed Central).

It is very difficult to get figures on readership. Journal paper circulation was in the old days a reasonable proxy, and the number of institutional and private subscriptions could give good hints. However, these are figures that publishers are reluctant to reveal for low-circulation journals (which most construction journals are), because they could make potential authors hesitate to submit their papers.

Of course, the best way of comparing readership would be to make a broad survey of researchers in the field, but this could be a study in its own right. A way around the dilemma is to measure the relative subscription prices (institutional electronic subscriptions) for the different journals. The differences were quite staggering, ranging from seven to thirty-three Euros an article. There are only insignificant quality differences between the journals; in fact, the lowest priced one has the highest impact factor of the three that are indexed by the ISI. The conclusion we draw is that the higher the price, the lower the number of subscribers, and hence the readership, is likely to be.

For the particular case of ITcon it was possible to get quite accurate figures based on our Web-server log files. This proved to be a non-trivial task. Since ITcon is a totally freely accessible Website that does not ask for user names and passwords, it is not possible to get the detailed data that a subscription-based system could provide. Also, the lack of this username and password barrier makes it quite hard to distinguish between Web robots and genuine human users. Our experience indicates that gross Web access statistics are very unreliable as indicators of real readership and greatly overestimate the number of readers.

We have found that typical log analysis software such as Analog removes only about a third of the robot accesses. The reason, we believe, is that, in addition to well-known robots like those of Google, Yahoo! or Inktomi, there are also a number of local or domain-specific robots. These are not generally known and may not behave as politely as the big ones. They had to be removed manually, as follows: (1) all clients that reported 'bot' in their user agent, or whose IP number or Web address is well known to be that of a robot, were removed; (2) all clients that made a request to robots.txt were removed; (3) all clients that made a request to a robot trap in SOPS, designed to capture robots, were removed; (4) all requests that merely compared a cached version with the version on our Website were removed. The last rule also removed accesses by users reading an article again and by students reading an article from the same workstation.
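The four removal rules can be sketched in a few lines of Python. This is an illustrative reconstruction under stated assumptions, not the procedure actually used for ITcon: the record fields, the list of known robot addresses and the trap path are hypothetical, and rule (4) is approximated by dropping responses with HTTP status 304 (Not Modified), which is what a server returns when a client merely revalidates its cached copy.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Hit:
    client: str      # IP number or host name of the requester
    path: str        # requested URL path
    user_agent: str  # User-Agent string as logged
    status: int      # HTTP status code of the response


# Hypothetical values for illustration only; neither comes from the paper.
KNOWN_ROBOT_CLIENTS = {"192.0.2.10"}
TRAP_PATH = "/trap/"


def human_hits(hits, known_robots=KNOWN_ROBOT_CLIENTS, trap_path=TRAP_PATH):
    """Return the hits that survive the four robot-removal rules."""
    # First pass: collect every client that gives itself away as a robot.
    robots = set(known_robots)                # rule 1: well-known robot addresses
    for h in hits:
        if ("bot" in h.user_agent.lower()     # rule 1: 'bot' in the user agent
                or h.path == "/robots.txt"    # rule 2: asked for robots.txt
                or h.path.startswith(trap_path)):  # rule 3: fell into the trap
            robots.add(h.client)
    # Second pass: drop all hits from those clients, plus cache
    # revalidations (rule 4, assumed here to be status 304).
    return [h for h in hits if h.client not in robots and h.status != 304]
```

Rules (1) to (3) act on clients, so a single request to robots.txt disqualifies every other request from the same address, whereas rule (4) acts on individual requests. That is why the sketch makes two passes over the log.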

The resulting statistics were discussed by the authors in a separate paper (Björk et al. 2005). On average the journal delivers some 12,000 pages on a weekday and 7,500 on a weekend. The largest drivers of its traffic are the search engines Google and Yahoo!.

In addition to journal articles, researchers in our domain publish even more conference papers. According to our study of construction IT researchers, they publish on average 1.6 journal articles and 2.6 conference papers per year (Björk and Turk 2000). From an information management perspective, getting access to these conference papers can be even more difficult than retrieving journal papers. Often, the conference proceedings are published by universities and the after-sales are minimal. A researcher finding a reference to an interesting conference paper often has great difficulty in retrieving it, unless he himself or a colleague next door who attended the conference has a copy of the paper proceedings on his bookshelf.

In order to do something about the situation, some of the key ITcon team, together with other colleagues, managed to get funding from the EU for an R&D project (SciX) which had as its primary aim the setting up of a subject-based e-print repository for construction IT papers (Martens et al. 2003). This repository, the ITC Digital Library, which has been built on the same software platform as ITcon, is now functional and currently contains a little over 1000 papers. Some of the older papers have been scanned in from paper proceedings.

Setting up a self-sustainable process of continuously filling the repository with content, after the initial bulk input which could be done within the SciX project, was one of the aims of the project. In this respect the repository has not fully achieved its aims. The key reason for this has been the reluctance of a number of key conference organizers to allow the open access posting of their proceedings in the repository, even after a delay. This is for a number of reasons, such as smoothly running partnerships with commercial publishers, fear of losing members and paying conference attendees if papers were made freely available, or copyright restrictions imposed by the professional societies under whose sponsorship the conferences are organized. Thus, currently, the database contains all the papers of one major conference series since 1989 as well as the papers of a minor conference. Additionally there are individually uploaded papers but, on the whole, too few researchers upload their own papers on their own initiative. The lesson to be learnt from this is that strategic partnerships with conference organizers, marketing, and initial critical mass are central to building a sustainable repository of this kind.

Currently the interest in publishing conference proceedings openly on the Web is on the rise and ready solutions such as the ITC repository could prove very valuable as technical platforms.

Open access journals that operate using an open-source-like business model (where the costs are absorbed by the universities of the editors and other staff) can be successful, but only under quite restrictive boundary conditions. The situation is thus partly the same as in the case of open source software projects, which seem to work well in areas which catch the interest of very skilled programmers and not at all in other areas. The type of business model that ITcon uses works well for journals with a low to medium number of submissions and published papers, and where demands such as copy-editing, graphical art work etc., which would necessitate paid workers, are not too stringent. It also works well for journals published in languages other than English, which have difficulties in obtaining scattered paying subscribers abroad, and for journals published in developing nations.

On the more technical level our experience is that readers are fully content with the PDF versions of papers that look like ordinary papers. One of the reasons might be that due to the rapid change-over from paper issues to electronic licenses also for subscription journals they are getting used to printouts as the standard reading mode.

Open access journals as a whole have often been criticised for low standards of reviewing, low numbers of published papers etc. One way to answer such critiques would be to be more open about certain parameters of our journals. This could, for instance, be done by publicly posting data about publication delays, acceptance rates, real Web downloads etc. on the journal Web sites. An indexing service such as the Directory of Open Access Journals could also start collecting and publishing standard measures, which would enable more comprehensive benchmarking. And perhaps some sort of quality certification system for peer-reviewed open access journals, which, among other things, would require openness about key parameters, could be envisaged.


© the authors, 2006. Last updated: 3 March, 2006